Intelligence Dossier

THE PLAYERS

An exhaustive directory of the individuals shaping the race to Artificial General Intelligence — lab CEOs, top researchers, policy makers, and key figures.

618 SUBJECTS ON FILE

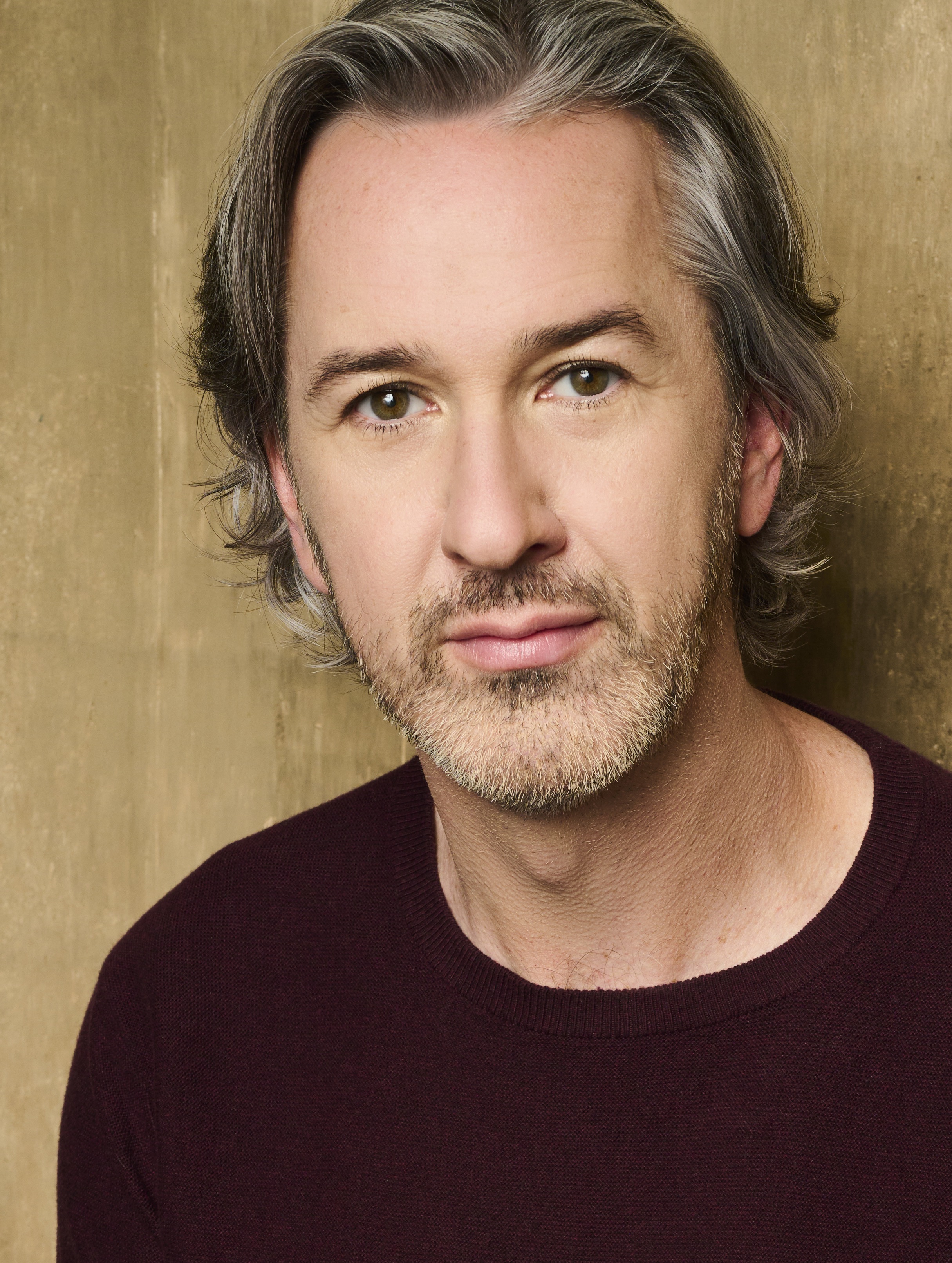

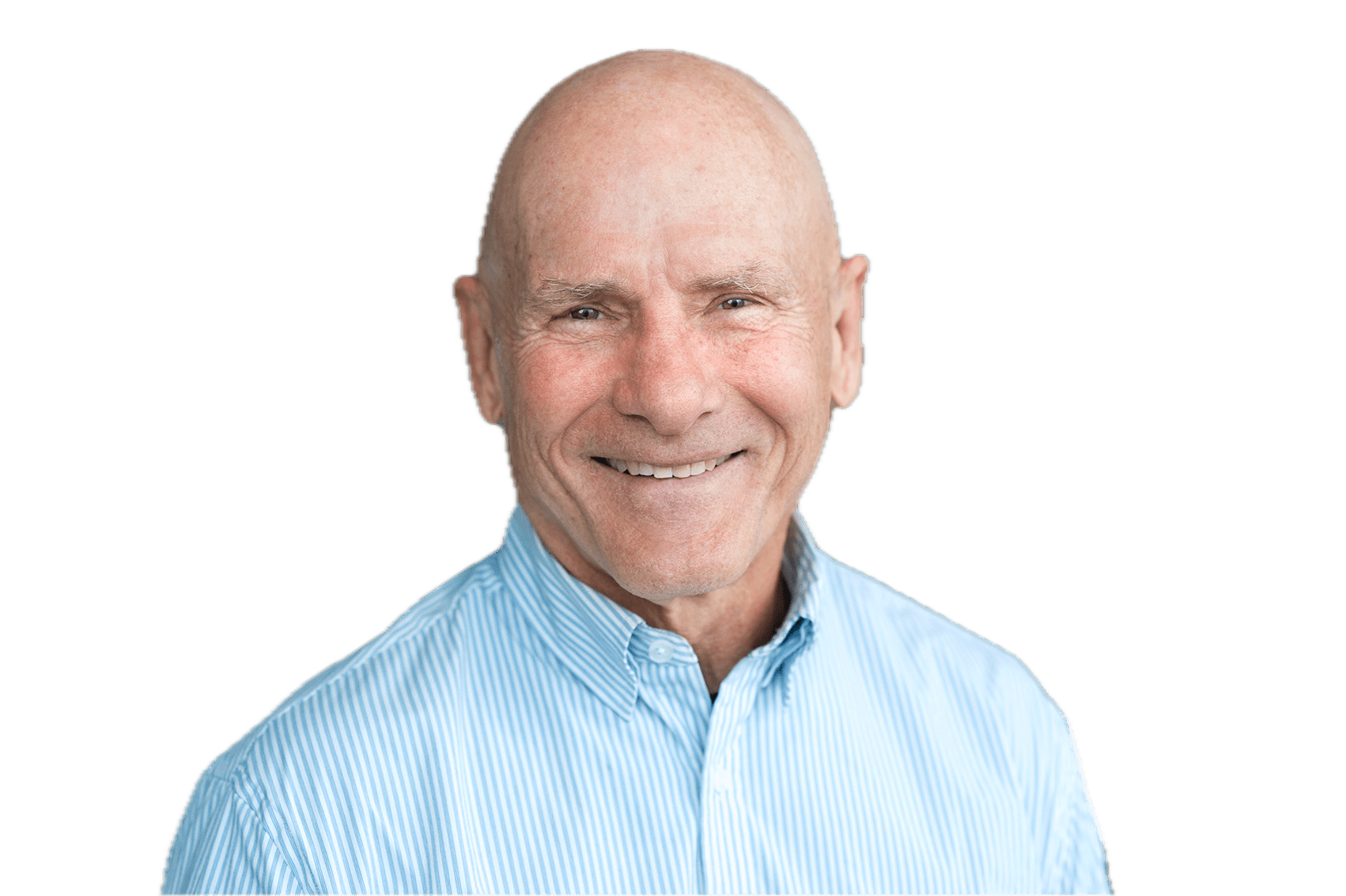

Dario Amodei

Nationality

American

Organization

Anthropic

Co-Founder & CEO, Anthropic

PhD, Biophysics — Princeton University

BA, Physics — Stanford University

Responsible scaling, concerned about power concentration

Strong safety advocate who founded Anthropic specifically to build safer AI. Warns about "unusually painful" job disruption and concentration of power in AI companies. Maintains "red lines" on military AI applications including mass surveillance and autonomous weapons.

John Schulman

Organization

Thinking Machines Lab

Chief Scientist

BS — Caltech

PhD — University of California

AI Safety Advocate

Schulman has expressed a strong commitment to AI alignment, aiming to ensure that AI systems are aligned with human values and goals. He joined Anthropic in 2024, stating his desire to "deepen my focus on AI alignment, and to start a new chapter of my career where I can return to hands-on technical work." In 2025, he joined Thinking Machines Lab as Chief Scientist, continuing his work in AI alignment.

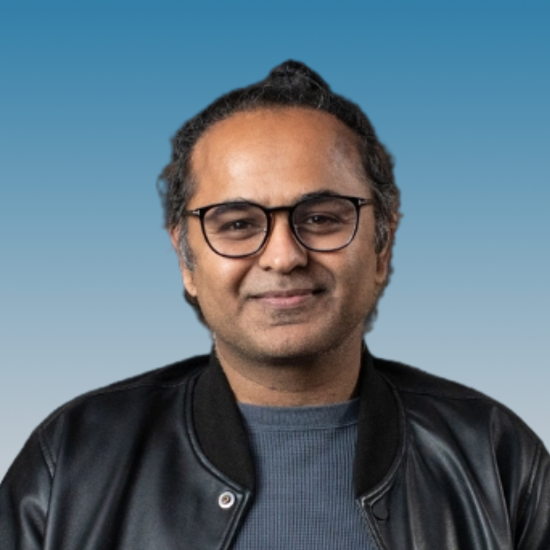

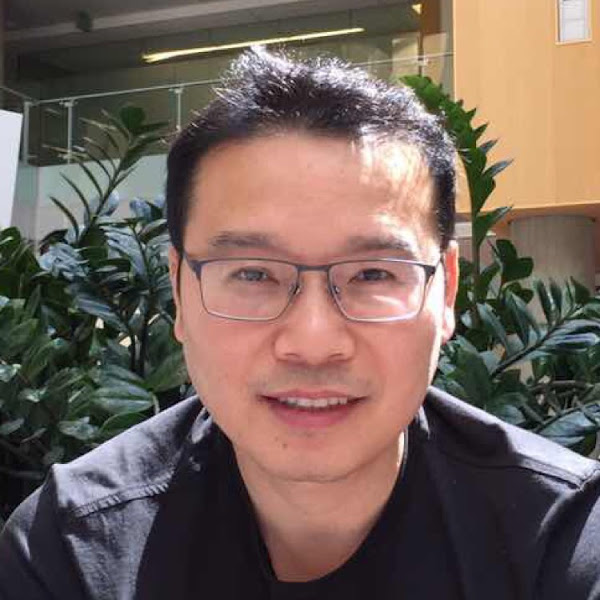

Mark Chen

Nationality

Taiwanese-American

Organization

OpenAI

Chief Research Officer

BS, Mathematics with Computer Science — Massachusetts Institute of Technology

Product-focused technologist

Supports responsible development. Focused on ensuring safety is integrated into the research and product pipeline at OpenAI.

Alec Radford

Organization

Thinking Machines Lab

Advisor

BS — Olin College

Research-focused

Radford emphasizes the importance of transparency and accountability in AI development, advocating for systems that are fair and unbiased. He promotes proactive risk management to ensure the ethical use of AI technology.

Jared Kaplan

Organization

Anthropic

Chief Science Officer and Co-Founder

BS — Stanford University

PhD — Harvard University

AI Safety Advocate

Kaplan emphasizes the importance of responsible scaling policies to ensure AI systems are developed safely and beneficially. He has been instrumental in implementing Anthropic's Responsible Scaling Policy, which aims to align AI systems with human values and prevent misuse.

Shane Legg

Nationality

New Zealander

Organization

Google DeepMind

Chief AGI Scientist

BS, Computer Science — University of Waikato

MS, Computer Science — University of Auckland

PhD, Machine Super Intelligence — IDSIA / Università della Svizzera italiana

Cautious about AGI timelines

Deeply committed to AGI safety. Has consistently warned about existential risk since before founding DeepMind. Leads DeepMind's AGI safety efforts. Believes AGI is approaching and safety work is urgent.

Jeff Dean

Nationality

American

Organization

Google

Chief Scientist, Google DeepMind and Google Research

PhD, Computer Science — University of Washington

BS, Computer Science and Economics — University of Minnesota

Pro-innovation within responsible guardrails

Supports responsible AI development within Google's framework. Believes in need for "algorithmic breakthroughs" alongside scaling. Advocates for internal safety teams and external collaboration on AI governance.

Jakub Pachocki

Nationality

Polish

Organization

OpenAI

Chief Scientist

BS, Computer Science — University of Warsaw

PhD, Theoretical Computer Science — Carnegie Mellon University

Research-focused

Supports safety-focused research. Believes AI models are capable of novel research and emphasizes the importance of understanding model capabilities and limitations.

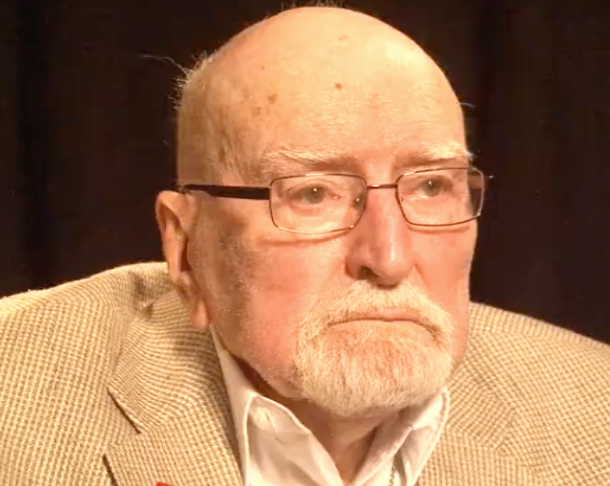

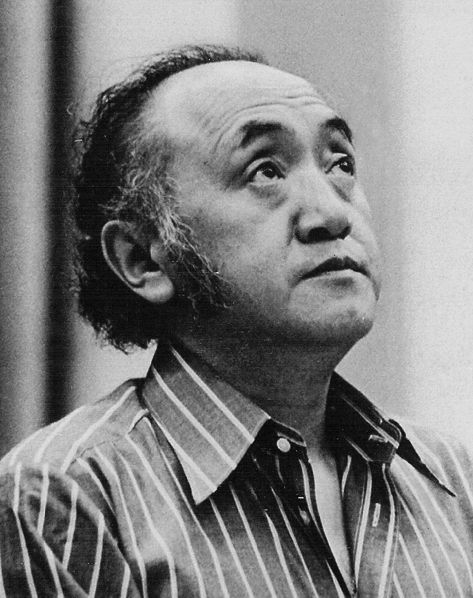

Geoffrey Hinton

Nationality

British-Canadian

Organization

University of Toronto

University Professor Emeritus, University of Toronto

PhD, Artificial Intelligence — University of Edinburgh

AI Safety Advocate

Deeply concerned about existential risk. Says he is "more worried" now than when he left Google in 2023. Warns AI is getting better at reasoning and deception. Advocates for regulation and international coordination.

Christopher Olah

Nationality

Canadian

Organization

Anthropic

Co-founder, Anthropic

Attended (no degree), Computer Science — University of Toronto

AI Safety Advocate

Deeply committed to AI safety through interpretability. Believes understanding what happens inside neural networks is critical for making AI safe. His work is the foundation of Anthropic's safety research agenda.

Noam Brown

Organization

OpenAI

Member of Technical Staff

PhD, Computer Science — Carnegie Mellon University

Safety-aligned researcher

Publicly associated more with reasoning capability research than formal safety leadership.

Paul Christiano

Nationality

American

Organization

US AI Safety Institute (NIST)

Head of AI Safety

BS, Mathematics — Massachusetts Institute of Technology

PhD, Statistical Learning Theory — University of California, Berkeley

AI Safety / Effective Altruism

One of the strongest voices for AI existential risk. Believes there is a significant probability of catastrophic outcomes from advanced AI. Advocates for robust safety evaluations, interpretability, and governance. Now leads US government AI safety evaluation efforts.

Julian Schrittwieser

Organization

Anthropic

Member of Technical Staff

BS — Vienna University of Technology

Research-focused

Schrittwieser has expressed a commitment to developing AI thoughtfully to maximize benefits and manage risks.

Sergey Levine

Nationality

American

Organization

UC Berkeley / Physical Intelligence

Associate Professor, UC Berkeley; Co-founder, Physical Intelligence

BS/MS, Computer Science — Stanford University

PhD, Computer Science — Stanford University

Research-focused

Believes in building general-purpose robotic intelligence through scalable learning. Focuses on making robot learning practical and sample-efficient.

Andrew Tulloch

Organization

Meta

Distinguished Engineer at Meta

BS — University of Sydney

Research-focused

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Tom Brown

Organization

Anthropic

Co-founder and Chief Compute Officer

BS — MIT

AI Safety Advocate

Anthropic emphasizes AI safety, aiming to develop AI systems that are both beneficial and aligned with human values.

Nat McAleese

Organization

Anthropic

Researcher

BS — University of Cambridge

PhD — University of Cambridge

AI Safety Advocate

Committed to ensuring AI systems are safe and aligned with human values, as evidenced by his work on AI safety benchmarks and involvement in AI safety discussions.

Andrej Karpathy

Nationality

Slovak-Canadian

Organization

Eureka Labs

Founder, Eureka Labs

BS, Computer Science and Physics — University of Toronto

MS, Computer Science — University of British Columbia

PhD, Computer Science — Stanford University

Open-source AI advocate

Pragmatic centrist. Acknowledges risks but believes open education and understanding of AI internals is the best safety strategy. Skeptical of heavy-handed regulation.

Jerry Tworek

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Igor Babuschkin

Organization

Babuschkin Ventures

Founder and CEO

BS — TU Dortmund

AI Safety Advocate

Babuschkin has publicly emphasized the importance of AI safety, particularly as AI systems become more capable and agentic, and has argued that safety must be studied and advanced so that the technology benefits humanity. On leaving xAI, he stated his commitment to building AI that advances humanity.

Diederik P. Kingma

Nationality

Dutch

Organization

Anthropic

Research Scientist, Anthropic

PhD (cum laude), Machine Learning — University of Amsterdam

Research-focused

Joined Anthropic, a safety-focused lab, suggesting alignment with responsible AI development. Works on improving the reliability and capability of large-scale ML systems.

David Silver

Nationality

British

Organization

Ineffable Intelligence

CEO & Founder

BA, Computer Science — University of Cambridge

MA, Computer Science — University of Cambridge

PhD, Reinforcement Learning — University of Alberta

Research-focused

Believes in building safe superintelligence through self-play and self-discovery rather than relying solely on human feedback. Argues LLMs alone will not reach superintelligence.

Quoc V. Le

Nationality

Vietnamese-American

Organization

Google DeepMind

Google Fellow, Google DeepMind

BSc, Computer Science — Australian National University

PhD, Computer Science — Stanford University

Research-focused

Focuses on making AI models more efficient and accessible. Works within Google's responsible AI framework.

Wenda Zhou

Organization

OpenAI

Researcher at OpenAI

Academic researcher

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Pieter Abbeel

Nationality

Belgian-American

Organization

UC Berkeley / Amazon

Professor, UC Berkeley; Head of LLM efforts, Amazon AGI

BS/MS, Electrical Engineering — KU Leuven

PhD, Computer Science — Stanford University

Pragmatic technologist

Focuses on building robust and reliable AI systems. Believes foundation models are the key to general-purpose robotics.

Tristan Hume

Organization

Anthropic

Performance Optimization Lead

BS — University of Waterloo

AI Safety Advocate

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

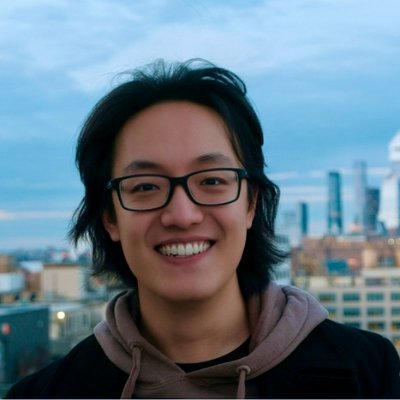

Horace He

Organization

Thinking Machines Lab

Researcher

BS — Cornell University

AI Infrastructure Advocate

Horace He's work focuses on enhancing the reliability and predictability of AI models, aiming to mitigate issues like nondeterminism in large language models.

Sebastian Borgeaud

Organization

Google DeepMind

Research Engineer

BS — University of Cambridge

PhD — University of Cambridge

Research-focused

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Alexander Kirillov

Organization

Thinking Machines Lab

Member of Technical Staff at Thinking Machines Lab

BS — Lomonosov Moscow State University

PhD — Heidelberg University

Research-focused

Thinking Machines Lab, where Kirillov is a member, emphasizes AI safety by maintaining a high safety bar, sharing best practices, and accelerating external research on alignment.

Chelsea Finn

Nationality

American

Organization

Stanford University / Physical Intelligence

Assistant Professor, Stanford University; Co-founder, Physical Intelligence

BS, Electrical Engineering and Computer Science — MIT

PhD, Computer Science — UC Berkeley

Research-focused

Focuses on building robust, generalizable robot learning systems. Researches how to make robots learn safely from limited data and human demonstrations.

Alexander Kolesnikov

Organization

Meta

AI Research Scientist

BS — Lomonosov Moscow State University

PhD — ISTA

Research-focused

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

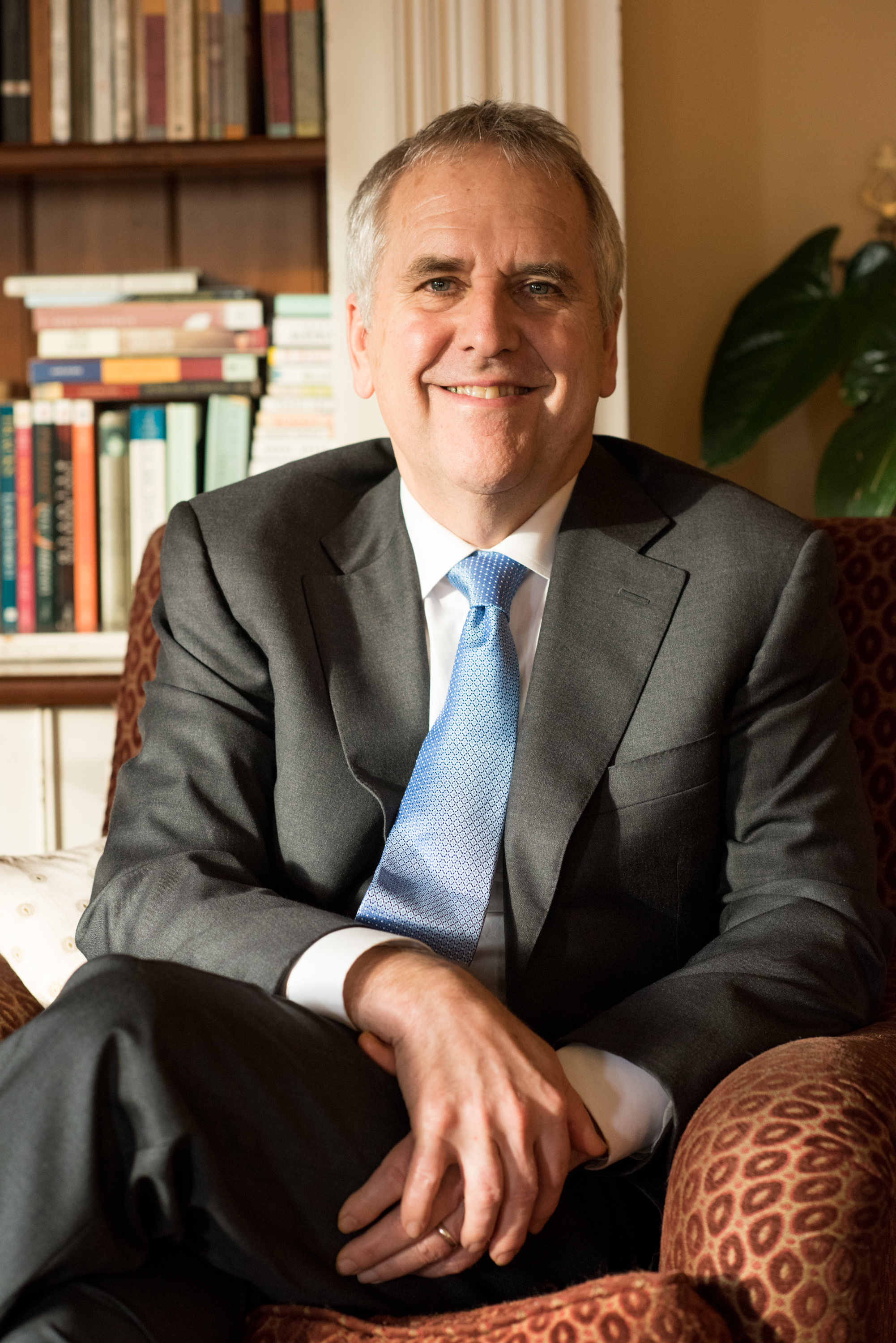

Yoshua Bengio

Nationality

Canadian

Organization

LawZero / Mila

Co-President & Scientific Director, LawZero; Founder & Scientific Advisor, Mila

PhD, Computer Science — McGill University

AI Safety Advocate

The most safety-focused of the three 2018 Turing Award laureates. Launched LawZero to build safe-by-design AI. Warns that frontier models show growing dangerous capabilities including deception and goal misalignment. Pushes for international governance frameworks.

Nick Ryder

Organization

OpenAI

Member of Technical Staff

Academic researcher

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Łukasz Kaiser

Organization

OpenAI

Member of Technical Staff at OpenAI

BS — University of Wrocław

PhD — RWTH Aachen University

Research-focused

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Lilian Weng

Organization

Fellows Fund

Distinguished Fellow

AI Safety Advocate

OpenAI's public materials place her at the core of technical safety systems work.

Alexander Wei

Organization

OpenAI

Research Scientist

BS — Harvard University

PhD — University of California

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Deli Chen

Organization

DeepSeek

Senior Researcher

BS — Peking University

AI Safety Advocate

Deli Chen has publicly warned about AI risks, emphasizing the need for responsible development and deployment of AI technologies.

Hunter Lightman

Organization

OpenAI

Researcher

Research-focused

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Robert Lasenby

Organization

Anthropic

Researcher

BS — University of Cambridge

PhD — University of Oxford

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Zhihong Shao

Organization

DeepSeek

Research Scientist

BS — Tsinghua University

PhD — Tsinghua University

Research-focused

DeepSeek has acknowledged safety vulnerabilities in its models and has undertaken evaluations and enhancements to address these issues, particularly in Chinese contexts. This reflects a proactive approach to improving AI safety within their systems.

Timothy P. Lillicrap

Organization

Google DeepMind

Staff Research Scientist

BS — University of Toronto

PhD — Queen's University

Research-focused

Timothy P. Lillicrap has not publicly stated a position on AI safety policies.

Prafulla Dhariwal

Organization

OpenAI

Technical Fellow

Pragmatic technologist

Primarily capability-focused public role with multimodal deployment responsibilities.

Dan Hendrycks

Nationality

American

Organization

Center for AI Safety (CAIS)

Executive Director, Center for AI Safety

BS, Computer Science — University of Chicago

PhD, Computer Science — University of California, Berkeley

AI Safety Advocate

Leading voice on AI existential risk. Believes advanced AI poses catastrophic and existential risks to humanity. Advocates for proactive safety research, robust evaluations, and governance frameworks.

Amanda Askell

Organization

Anthropic

Member of Technical Staff

BS — University of Dundee

PhD — New York University

AI Safety Advocate

Askell has publicly advocated for AI safety and alignment. She has argued that designing ethical AI requires humility rather than rigid certainty, and that AI systems should be able to weigh competing considerations and explain their reasoning rather than simply follow strict rules.

Jimmy Ba

Nationality

Canadian

Organization

University of Toronto / Vector Institute

Assistant Professor, University of Toronto; CIFAR AI Chair, Vector Institute

BSc, Computer Science — University of Toronto

MSc, Computer Science — University of Toronto

PhD, Computer Science — University of Toronto

Academic

Academic focus on building more reliable and efficient learning algorithms. Contributes to the Canadian AI ecosystem through CIFAR and Vector Institute.

Mostafa Dehghani

Organization

Google DeepMind

Research Scientist

BS — University of Tehran

PhD — University of Amsterdam

Academic researcher

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Shengjia Zhao

Organization

Meta Platforms Inc.

Chief Scientist of Meta Superintelligence Labs

AI Safety Advocate

Zhao has been instrumental in developing AI models with a focus on safety and reliability, as evidenced by his work on the o1 reasoning model, which emphasizes structured and interpretable AI outputs.

Barret Zoph

Organization

OpenAI

Enterprise Expansion Lead at OpenAI

BS — USC

Pragmatic technologist

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Sam McCandlish

Organization

Anthropic

Chief Architect

BS — Brandeis University

PhD — Stanford University

AI Safety Advocate

As a co-founder of Anthropic, McCandlish has been involved in developing AI models with a focus on safety and ethical considerations.

Dan Selsam

Organization

OpenAI

Researcher at OpenAI

BS — Stanford University

PhD — Stanford University

Research-focused

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Jan Leike

Nationality

German

Organization

Anthropic

Head of Alignment Science

MS, Computer Science — University of Freiburg

PhD, Reinforcement Learning Theory — Australian National University

AI Safety Advocate

One of the most vocal alignment researchers. Left OpenAI because he felt safety was not being prioritized sufficiently. Believes alignment of superhuman AI systems is the central challenge. Cautiously optimistic that progress is being made.

Yang Song

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Tri Dao

Organization

Together.AI

Co-founder and Researcher

BS — Stanford University

PhD — Stanford University

Frontier lab operator

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Ethan Perez

Organization

Anthropic

Research Scientist

BS — Rice University

PhD — New York University

Safety-aligned researcher

Perez advocates for rigorous safety measures in AI development, emphasizing the importance of alignment with human values.

Long Ouyang

Organization

OpenAI

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Jeffrey Wu

Organization

Anthropic

Research Scientist

BS — MIT

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

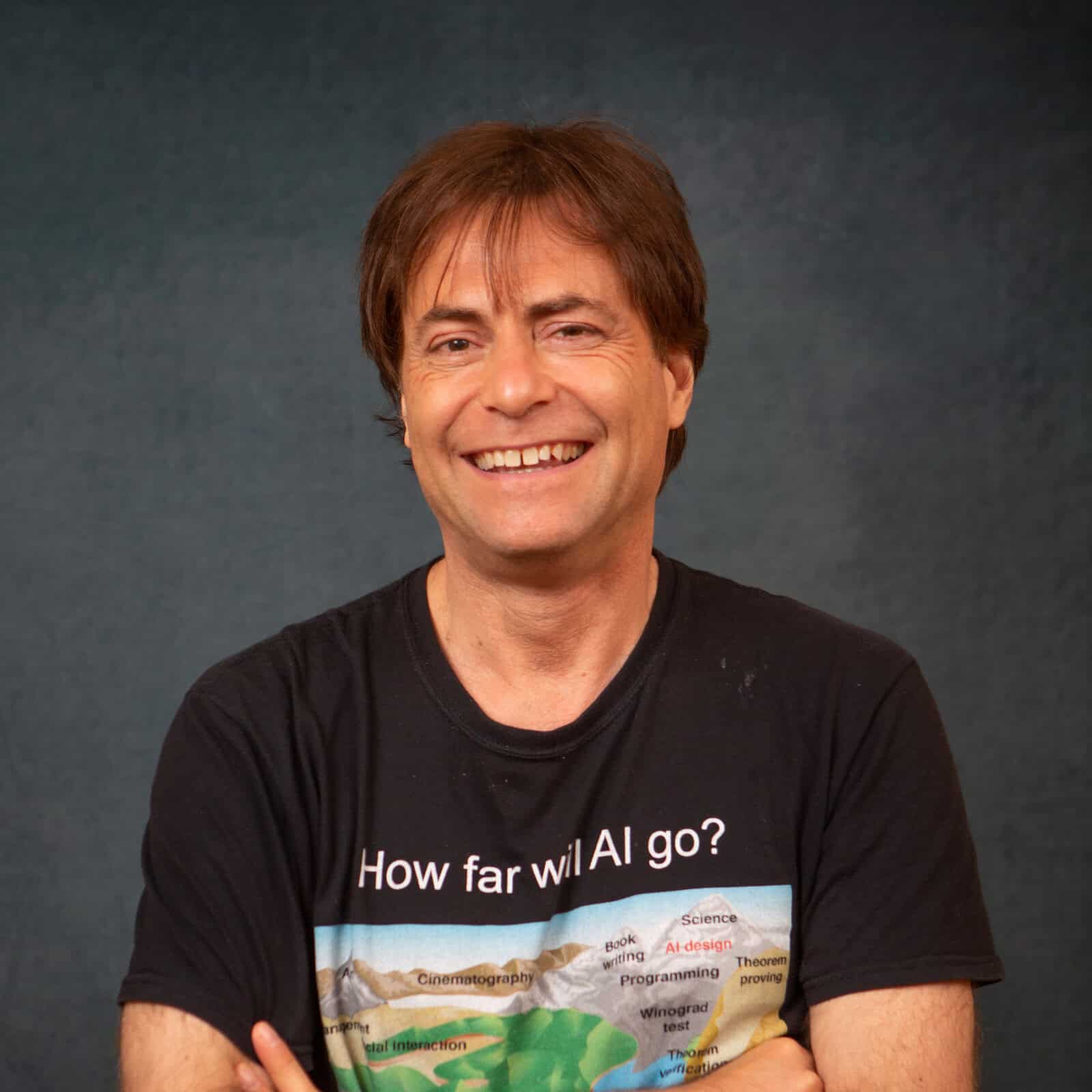

Jürgen Schmidhuber

Nationality

German

Organization

KAUST / IDSIA

Director of AI Initiative, KAUST; Scientific Director, Swiss AI Lab IDSIA

Diplom, Computer Science — Technical University of Munich

PhD, Computer Science — Technical University of Munich

Accelerationist

Optimistic about AI progress. Believes AI will be beneficial and that existential risk concerns are overstated. Sees AGI as inevitable and broadly positive for humanity.

Fei-Fei Li

Nationality

Chinese-American

Organization

Stanford University / World Labs

Professor of Computer Science, Stanford University; Co-Founder & CEO, World Labs

PhD, Electrical Engineering — California Institute of Technology

BA, Physics — Princeton University

Advocate for democratized and human-centered AI

Believes in human-centered AI development. Advocates for AI that augments human capabilities rather than replaces them, with strong emphasis on diversity and ethical deployment.

Naman Goyal

Organization

Thinking Machines Lab

BS — Savitribai Phule Pune University

PhD — Georgia Institute of Technology

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Rowan Zellers

Organization

Thinking Machines Lab

BS — Harvey Mudd College

PhD — University of Washington

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Jonas Adler

Organization

Google DeepMind

Research Scientist

BS — KTH Royal Institute of Technology

PhD — KTH Royal Institute of Technology

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Luke Metz

Organization

Thinking Machines Lab

BS — Olin College of Engineering

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Nicholas Carlini

Organization

Anthropic

Research Scientist

BS — University of California

PhD — University of California

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Percy Liang

Nationality

American

Organization

Stanford University

Associate Professor of Computer Science, Stanford University; Director, Center for Research on Foundation Models (CRFM)

BS, Computer Science — Massachusetts Institute of Technology

MEng, Computer Science — Massachusetts Institute of Technology

PhD, Computer Science — University of California, Berkeley

Transparency advocate

Strong advocate for transparency, rigorous evaluation, and accountability in foundation model development. Believes standardized benchmarks are essential to understand capabilities and risks.

Lucas Beyer

Organization

Meta

Research Scientist

BS — RWTH Aachen University

PhD — RWTH Aachen University

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Sholto Douglas

Organization

Anthropic

BS — University of Sydney

Frontier lab operator

Works inside a lab with a public safety-first posture; individual views here are inferred from reliability and deployment context rather than detailed personal statements.

Albert Gu

Organization

Cartesia AI

Co-founder and Researcher

BS — Stanford University

PhD — Stanford University

Frontier lab operator

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Zico Kolter

Organization

Carnegie Mellon University

Associate Professor, Machine Learning Department

BS — Georgetown University

PhD — Stanford University

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Eric Zelikman

Organization

xAI

BS — Stanford University

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Eric Mitchell

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Hongyu Ren

Organization

OpenAI

Research Lead

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Hyung Won Chung

Organization

OpenAI

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

James Bradbury

Organization

Anthropic

Research Scientist

BS — Stanford University

Frontier lab operator

Works inside a lab with a public safety-first posture; individual views here are inferred from reliability and deployment context rather than detailed personal statements.

Aidan Gomez

Nationality

Canadian

Organization

Cohere

Co-founder & CEO, Cohere

BSc, Computer Science and Mathematics — University of Toronto

PhD, Computer Science — University of Oxford

Pragmatic technologist, skeptical of effective altruism

Focuses on near-term practical risks over hypothetical existential threats. Critical of effective altruism's influence on AI safety discourse. Prioritizes enterprise security and data privacy as the real safety frontier.

Yi Tay

Organization

Google DeepMind

Research Scientist

BS — Nanyang Technological University, Singapore

PhD — Nanyang Technological University, Singapore

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Christopher Ré

Organization

Stanford University

BS — Cornell University

PhD — University of Washington

Academic researcher

Primarily research-focused public profile; no detailed standalone safety doctrine is documented here.

Giambattista Parascandolo

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Rahul Arya

Organization

Google DeepMind

Research Scientist

BS — University of California

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Xuezhi Wang

Organization

Google DeepMind

Research Scientist

BS — Tsinghua University

PhD — Carnegie Mellon University

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Leo Gao

Organization

OpenAI

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Robin Rombach

Organization

Black Forest Labs

BS — Heidelberg University

PhD — Heidelberg University

Research-focused technologist

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Jack Rae

Organization

Meta

BS — University of Bristol

PhD — UCL

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Alex Graves

Organization

Google DeepMind

Research Scientist

BS — University of Edinburgh

PhD — Technical University of Munich

Academic researcher

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Sami Jaghouar

Organization

Prime Intellect

BS — Université de Technologie de Compiègne

Research-focused technologist

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Jonathan Gordon

Organization

OpenAI

Research Scientist

BS — Ben-Gurion University of the Negev

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Ian Goodfellow

Nationality

American

Organization

Google DeepMind

Research Scientist, Google DeepMind

BS & MS, Computer Science — Stanford University

PhD, Machine Learning — Université de Montréal

Pragmatic researcher

Focused on practical AI security including adversarial robustness and machine learning safety. Has contributed significantly to understanding vulnerabilities in neural networks.

Collin Burns

Organization

Anthropic

BS — Columbia University

PhD — University of California

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Ryan Greenblatt

Organization

Redwood Research

Co-Founder and Researcher

BS — Brown University

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Sandhini Agarwal

Organization

OpenAI

Policy and governance operator

Publicly associated with launch safety, policy, and collective alignment work.

Jon Barron

Organization

Google DeepMind

BS — University of Toronto

PhD — University of California

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Jacob Steinhardt

Organization

Transluce

Co-Founder and Researcher

BS — MIT

PhD — Stanford University

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Jiahui Yu

Organization

Meta

Research Scientist in AI

BS — USTC

PhD — University of Illinois

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Wojciech Zaremba

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Christopher Hesse

Organization

OpenAI

BS — Case Western Reserve University

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Raphael Koster

Organization

Google DeepMind

Research Scientist

BS — University of Bremen

PhD — UCL

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Christian Szegedy

Organization

Morph Labs

Co-Founder and Researcher

BS — Eötvös Loránd University

PhD — The University of Bonn

Frontier lab operator

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Shaoqing Ren

Organization

NIO

Senior Researcher

BS — University of Science and Technology of China

PhD — University of Science and Technology of China

Research-focused technologist

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Max Schwarzer

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Dan Roberts

Organization

OpenAI

Research Scientist

BS — Duke University

PhD — MIT

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Neel Nanda

Nationality

British

Organization

Google DeepMind

Mechanistic Interpretability Team Lead, Google DeepMind

BA, Pure Mathematics — University of Cambridge

AI Safety Advocate

Committed to AI safety through interpretability research. Has become more measured about what mechanistic interpretability can achieve, pivoting toward practical safety applications rather than full theoretical understanding of models.

Kai Chen

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Jason Wei

Organization

OpenAI

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Nelson Elhage

Organization

Anthropic

Research Scientist

BS — MIT

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Tom Henighan

Organization

Anthropic

Co-founder and Researcher

BS — Ohio State University

PhD — Stanford University

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Kaiming He

Nationality

Chinese

Organization

MIT / Google DeepMind

Associate Professor of EECS (tenured), MIT; Distinguished Scientist (part-time), Google DeepMind

BS, Physics — Tsinghua University

PhD, Information Engineering — Chinese University of Hong Kong

Research-focused pragmatist

Focuses on fundamental research to improve model reliability and efficiency. Not publicly vocal on safety policy but contributes to responsible research practices.

Piotr Dollár

Organization

FAIR

Research Scientist

BS — Harvard University

PhD — UC San Diego

Research-focused technologist

Primarily research-focused public profile; no detailed standalone safety doctrine is documented here.

Shuchao Bi

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Will Brown

Organization

Prime Intellect

BS — University of Pennsylvania

PhD — Columbia University

Research-focused technologist

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Neil Houlsby

Organization

Anthropic

Research Scientist

BS — University of Cambridge

PhD — University of Cambridge

Frontier lab operator

Works inside a lab with a public safety-first posture; individual views here are inferred from reliability and deployment context rather than detailed personal statements.

Yair Carmon

Organization

SSI

BS — Israel Institute of Technology

PhD — Stanford University

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

DJ Strouse

Organization

OpenAI

BS — USC

PhD — Princeton University

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Trenton Bricken

Organization

Anthropic

BS — Duke University

PhD — Harvard University

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Llion Jones

Nationality

Welsh

Organization

Sakana AI

Co-founder & CTO, Sakana AI

BSc, Computer Science — University of Birmingham

MSc, Advanced Computer Science — University of Birmingham

Research diversity advocate

Concerned about monoculture in AI research. Advocates for exploring diverse architectures beyond transformers to avoid concentrating risk in a single paradigm.

Jianlin Su

Organization

Kimi

BS — Sun Yat-sen University

Research-focused technologist

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Sherjil Ozair

Organization

General Agents

BS — IIT

PhD — Université de Montréal

Research-focused technologist

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Devendra Singh Chaplot

Organization

Thinking Machines Lab

BS — IIT

PhD — Carnegie Mellon University

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Stephen Roller

Organization

Thinking Machines Lab

BS — North Carolina State University

PhD — University of Texas Austin

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Steven Hansen

Organization

Google DeepMind

BS — Carnegie Mellon University

PhD — Stanford University

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Jacob Hilton

Organization

Alignment Research Center

BS — University of Cambridge

PhD — University of Leeds

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Jean Pouget-Abadie

Organization

Google

Research Scientist

BS — Ecole Polytechnique

PhD — Harvard University

Applied AI builder

Focuses on infrastructure reliability and training efficiency; no detailed standalone safety doctrine is documented here.

Alexey Dosovitskiy

Organization

Inceptive

BS — Lomonosov Moscow State University

PhD — Lomonosov Moscow State University

Research-focused technologist

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Edward J. Hu

Organization

Stealth

BS — The Johns Hopkins University

PhD — Université de Montréal

Research-focused technologist

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Koray Kavukcuoglu

Nationality

Turkish

Organization

Google DeepMind

CTO & Chief AI Architect (SVP), Google DeepMind

BS, Aerospace Engineering — Middle East Technical University

MS, Computer Science — New York University

PhD, Computer Science — New York University

Product-focused technologist

Supports responsible development through product integration. Focuses on ensuring AI capabilities are deployed safely at scale within Google products.

Francois Chollet

Organization

Ndea

BS — ENSTA Paris

Research-focused technologist

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Aadit Juneja

Organization

xAI

SDK contributor (xai-org)

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Aakash Sastry

Organization

xAI

CEO & co-founder of Hotshot; joined xAI via acquisition

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Abhinav Gupta

Organization

Google DeepMind

Research Scientist, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Adam Jones

Organization

Anthropic

MCP Product Engineering

Frontier lab operator

Supports Anthropic's developer tooling and agent infrastructure.

Adam Lelkes

Organization

Google DeepMind

Senior Research Scientist, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Adele Li

Organization

OpenAI

Product Lead

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Aditya Prerepa

Organization

xAI

Member of Technical Staff

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Aditya Ramesh

Organization

OpenAI

World Simulation Lead

Safety-aligned researcher

Public role is capability-centric, though deployed systems necessarily pass through OpenAI safety processes.

Aditya Srinivas Timmaraju

Organization

Google DeepMind

Senior Staff Research Engineer, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Adriaan Engelbrecht

Organization

Anthropic

Applied AI

Frontier lab operator

Supports real-world deployment of Anthropic systems.

Ahmad Al-Dahle

Nationality

Canadian

Organization

Airbnb

CTO, Airbnb (since Jan 2026); Former VP & Head of Generative AI, Meta

BEng, Engineering — University of Waterloo

Open-source builder

Focuses on infrastructure reliability and training efficiency; no detailed standalone safety doctrine is documented here.

Ahmed El-Kishky

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Aidan Clark

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Aja Huang

Nationality

Taiwanese

Organization

Google DeepMind

Senior Staff Research Scientist, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

AJ Alt

Organization

Anthropic

Research / product contributor

Safety-aligned researcher

Works within Anthropic's public safety-first and research-driven framework.

Ajeya Cotra

Nationality

American

Organization

METR

Member of Technical Staff, METR (Model Evaluation & Threat Research)

BS, Electrical Engineering and Computer Science — University of California, Berkeley

AI safety-focused, effective altruism aligned

Believes there is a meaningful chance of transformative AI within the next decade. Thinks current safety plans that rely on "using AI to make AI safe" may be insufficient. Advocates for rigorous external evaluation, threat modeling, and preparing for scenarios where AI systems could resist human oversight.

Akshay Nathan

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Alan Karthikesalingam

Organization

Google DeepMind

Research Scientist, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Aleksander Madry

Organization

OpenAI

Head of Preparedness

Policy and governance operator

Strongly aligned with evaluation-heavy preparedness work for frontier systems.

Alexander Pan

Organization

xAI

xAI (research / safety fellowship mentioned)

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Alexandra Sanderford

Organization

Anthropic

Economic research contributor

Safety-aligned researcher

Works within Anthropic's privacy-preserving, safety-first research framework.

Alexandr Wang

Nationality

American

Organization

Meta

Chief AI Officer

Attended, Computer Science — MIT

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Alex Davies

Organization

Google DeepMind

Founding Lead, AI for Maths, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Alex Gruenstein

Organization

Google DeepMind

Senior Director of Engineering, Gemini App, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Alex Kendall

Nationality

British

Organization

Wayve

Co-Founder & CEO, Wayve

PhD, Computer Science — University of Cambridge

Academic researcher

Primarily research-focused public profile; no detailed standalone safety doctrine is documented here.

Alex Krizhevsky

Nationality

Ukrainian-Canadian

Organization

Two Bear Capital

Venture Partner, Two Bear Capital

BSc, Computer Science — University of Toronto

MSc, Computer Science — University of Toronto

PhD, Computer Science — University of Toronto

Pragmatic technologist

Not publicly vocal on AI safety. Focused on practical applications and investing in responsible AI startups.

Alex Nichol

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Alex Peng

Organization

xAI

Member of Technical Staff

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Alex Tamkin

Organization

Anthropic

Research contributor

Safety-aligned researcher

Works within Anthropic's public safety-first and research-driven framework.

Ali Farhadi

Nationality

Iranian-American

Organization

Allen Institute for AI (Ai2) / University of Washington

CEO, Allen Institute for AI (Ai2); Professor, University of Washington

PhD, Computer Science — University of Illinois at Urbana-Champaign

Open-source AI advocate

Believes open-source AI is the safest path forward. Advocates for transparency and broad access to AI tools and models.

Amanda Donohue

Organization

Anthropic

Head of Product

Safety-aligned researcher

Contributes to Anthropic product deployment within the company's safety-first framing.

Amar Subramanya

Nationality

Indian

Organization

Apple

VP of AI, Apple

BE, Electronics and Communications Engineering — Bangalore University / UVCE

PhD, Computer Science — University of Washington

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Amy Soller

Organization

xAI

Mission Manager (Intelligence Community Lead)

Policy and governance operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Anca Dragan

Nationality

Romanian-American

Organization

Google DeepMind / UC Berkeley

Head of AI Safety and Alignment, Google DeepMind; Associate Professor (on leave), UC Berkeley

BS, Computer Science — Jacobs University Bremen

PhD, Robotics — Carnegie Mellon University

AI safety advocate

Deeply committed to AI alignment. Now heads safety and alignment research at Google DeepMind. Researches how AI systems can better understand, predict, and align with human intentions and values.

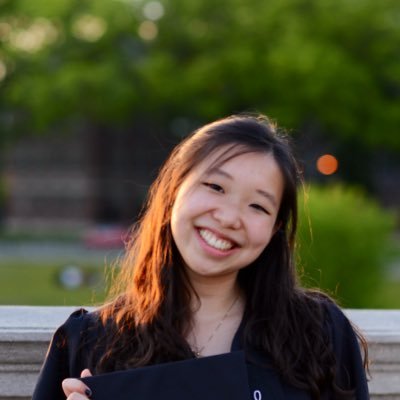

Andi Peng

Nationality

American

Organization

Humans&

Co-Founder, Humans&

PhD, Computer Science (CSAIL) — MIT

MPhil, Marshall Scholar — University of Cambridge

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Andrea Vallone

Organization

OpenAI

Policy and governance operator

Associated with policy, refusals, and model-behavior evaluation.

Andrew Barto

Nationality

American

Organization

University of Massachusetts Amherst

Professor Emeritus of Computer Science, University of Massachusetts Amherst

BS, Mathematics — University of Michigan

MS, Computer and Communication Sciences — University of Michigan

PhD, Computer Science — University of Michigan

Academic

Focuses on foundational research. Has expressed concern about ensuring AI systems learn aligned reward functions.

Andrew Bosworth

Research-focused technologist

Public safety posture is not yet fully documented; this profile currently reflects role, organization, and research area.

Andrew Braunstein

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Andrew Burlinson

Organization

xAI

Expert Team Lead (Grok Imagine)

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Andrew Cohen

Organization

xAI

Member of Technical Staff

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Andrew Dudzik

Organization

Google DeepMind

Senior Research Scientist, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Andrew Ma

Organization

xAI

Member of Technical Staff (departed 2026)

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Andrew Ng

Nationality

British-American

Organization

AI Fund / AI Aspire / DeepLearning.AI / Landing AI

Managing General Partner, AI Fund; Managing Partner, AI Aspire; Founder & CEO, DeepLearning.AI; Executive Chairman, Landing AI

PhD, Computer Science — University of California, Berkeley

Pragmatic technologist

Opposes heavy regulation. At Davos 2026, argued AI job displacement fears are exaggerated — impact is more nuanced when jobs are broken into tasks. Advocates for open-source and broad access.

Andrew Zisserman

Nationality

British

Organization

University of Oxford / Google DeepMind

Royal Society Research Professor & Professor of Computer Vision Engineering, University of Oxford

PhD, Mathematics — University of Cambridge

Academic

Focused on advancing fundamental understanding of computer vision. Engages with responsible AI through academic research and mentorship.

Anelia Angelova

Organization

Google DeepMind

Principal Scientist and Vision-Language Lead, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Anna Makanju

Organization

OpenAI

Vice President of Global Affairs

Policy and governance operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Anthony Armstrong

Organization

xAI

CFO

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

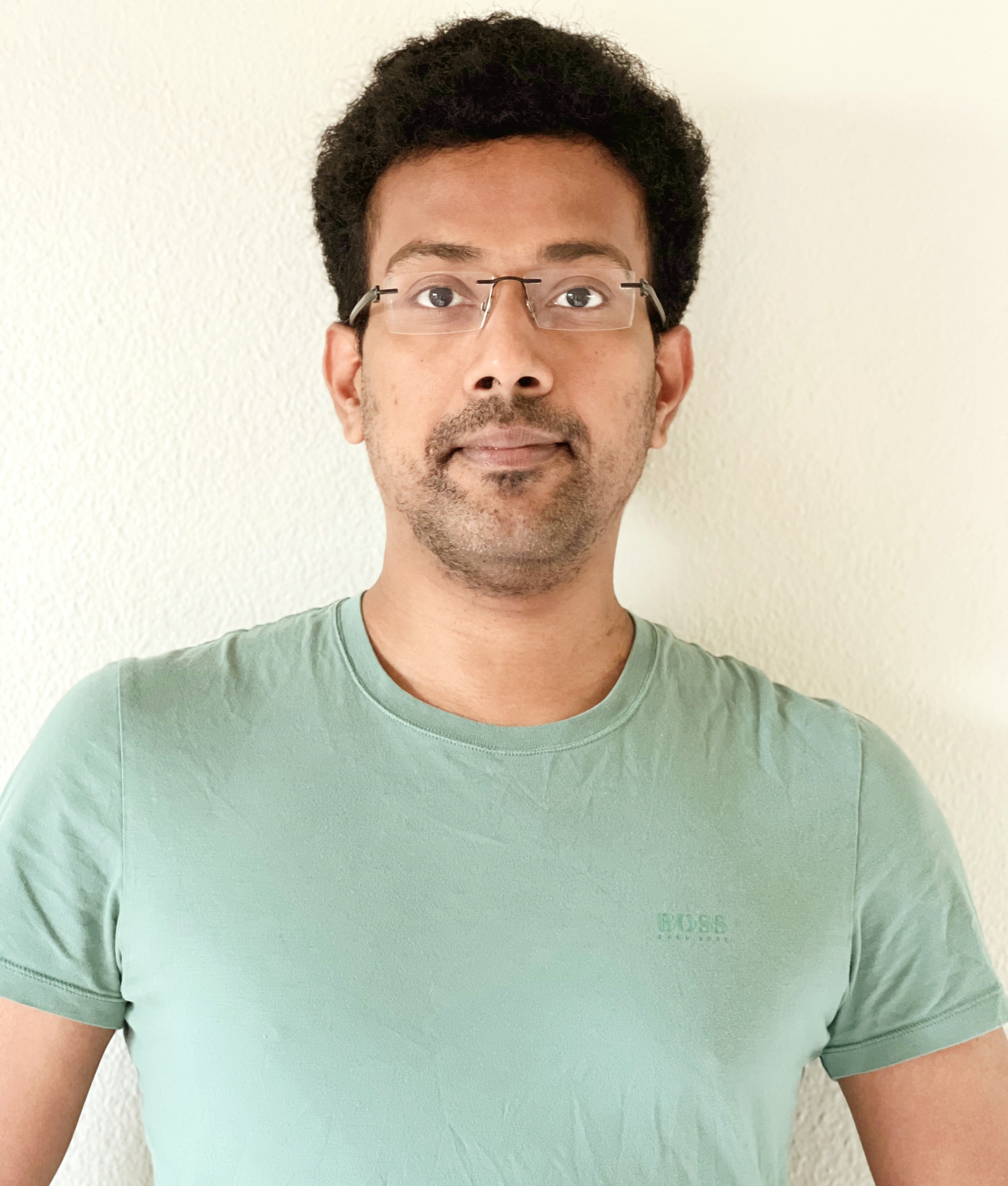

Aravind Srinivas

Nationality

Indian

Organization

Perplexity AI

Co-Founder & CEO, Perplexity AI

PhD, Computer Science — UC Berkeley

Frontier lab operator

Primarily research-focused public profile; no detailed standalone safety doctrine is documented here.

Aren Jansen

Organization

Google DeepMind

Research Scientist, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Ari Morcos

Nationality

American

Organization

DatologyAI

Co-Founder & CEO, DatologyAI

BS, Physiology & Neuroscience — UC San Diego

PhD, Neurobiology — Harvard University

Frontier lab operator

Primarily research-focused public profile; no detailed standalone safety doctrine is documented here.

Arjun Reddy Akula

Organization

Google DeepMind

Research Scientist, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Arsha Nagrani

Organization

Google DeepMind

Research Scientist, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Arthur Mensch

Nationality

French

Organization

Mistral AI

Co-founder & CEO, Mistral AI

MSc, Applied Mathematics — École Polytechnique

MSc, Mathematics, Vision and Learning — École Normale Supérieure Paris-Saclay

PhD, Machine Learning — Université Paris-Saclay

Open-source AI advocate, European tech sovereignty proponent

Believes AI safety responsibility lies with developers deploying models, not foundation model builders. Advocates open-source transparency as the best safety guarantee. Supports product-level regulation over model-level regulation.

Arvind KC

Organization

OpenAI

Chief People Officer

Safety-aligned researcher

No distinct technical safety stance located in first-party materials reviewed.

Arvind Narayanan

Nationality

Indian-American

Organization

Princeton University

Professor of Computer Science & Director of CITP, Princeton University

BTech, Computer Science and Engineering — Indian Institute of Technology Madras

PhD, Computer Science — University of Texas at Austin

Evidence-based policy advocate

Skeptical of both AI hype and existential risk framing. Focuses on distinguishing genuine AI capabilities from "snake oil." Advocates for empirical accountability — testing AI claims against evidence rather than speculation. Warns about predictive AI systems that don't work but are deployed anyway.

Ashish Vaswani

Nationality

Indian-American

Organization

Essential AI

Co-Founder & CEO, Essential AI

BE, Computer Science — Birla Institute of Technology, Mesra

PhD, Computer Science — University of Southern California

Open science advocate

Advocates for open science and foundational research transparency. Believes sustained AI progress depends on open collaboration.

Asma Ghandeharioun

Organization

Google DeepMind

Senior Research Scientist, People + AI Research, Google DeepMind

Safety-aligned researcher

Explicitly works on aligning language models with human values.

Avery Rogers

Organization

Anthropic

Member of Technical Staff

Frontier lab operator

Contributes to Anthropic technical delivery.

Ayush Jaiswal

Organization

xAI

Worked on Grok (departed 2026)

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Balaji Lakshminarayanan

Organization

Google / Google DeepMind

Research Scientist, Google / Google DeepMind

Safety-aligned researcher

Strongly associated with uncertainty, reliability, and robust model behavior.

Barry Zhang

Organization

Anthropic

Engineering contributor

Safety-aligned researcher

Contributes to Anthropic's developer and agent engineering stack within the company's safety-first framing.

Been Kim

Nationality

South Korean

Organization

Google DeepMind

Senior Staff Research Scientist, Google DeepMind

PhD, Computer Science — MIT

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Ben Goertzel

Nationality

American-Brazilian

Organization

SingularityNET

Founder, CEO & Chief Scientist, SingularityNET; CEO, ASI Alliance

BA, Quantitative Research — Bard College at Simon's Rock

PhD, Mathematics — Temple University

Academic researcher

Focuses on infrastructure reliability and training efficiency; no detailed standalone safety doctrine is documented here.

Berkin Akin

Organization

Google DeepMind

Software Engineer, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Biao He

Organization

xAI

Member of Technical Staff

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Bill Dally

Nationality

American

Organization

NVIDIA

Chief Scientist & SVP of Research, NVIDIA

Academic researcher

Focuses on infrastructure reliability and training efficiency; no detailed standalone safety doctrine is documented here.

Bill Peebles

Organization

OpenAI

Sora Lead

Safety-aligned researcher

Publicly known primarily for generative video work rather than safety-specific leadership.

Bin Wu

Organization

Anthropic

Engineering contributor

Safety-aligned researcher

Contributes to Anthropic's developer and agent engineering stack within the company's safety-first framing.

Bob McGrew

Nationality

American

Organization

Arda

Founder

BS, Computer Science — Stanford University

Frontier lab operator

Primarily research-focused public profile; no detailed standalone safety doctrine is documented here.

Boris Cherny

Organization

Anthropic

Head of Claude Code

Safety-aligned researcher

Supports Anthropic's developer tooling within its safety-focused framing.

Brad Abrams

Organization

Anthropic

Product Manager

Safety-aligned researcher

Supports Anthropic product deployment within its safety-first framing.

Brad Lightcap

Organization

OpenAI

Chief Operating Officer

Frontier lab operator

Primarily an operating executive; public role emphasizes responsible scale and deployment.

Brendan Jou

Organization

Google DeepMind

Research Scientist, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Brenna O'Brocta

Organization

xAI

AI Tutor

Safety-aligned researcher

Public profile centers on safety, evaluation, and reliability work around advanced AI systems.

Briana Hamilton

Organization

xAI

Environment, Health and Safety Manager

Frontier lab operator

Role covers workplace environment, health, and safety rather than AI safety research; no AI safety posture is documented here.

Brian Bjelde

Organization

xAI

Mission Manager

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Brian Calvert

Organization

Anthropic

Research contributor

Safety-aligned researcher

Works within Anthropic's public safety-first and research-driven framework.

Bryan Catanzaro

Nationality

American

Organization

NVIDIA

VP of Applied Deep Learning Research, NVIDIA

PhD, Electrical Engineering and Computer Sciences — UC Berkeley

Applied AI builder

Focuses on infrastructure reliability and training efficiency; no detailed standalone safety doctrine is documented here.

Bryan Seethor

Organization

Anthropic

Research contributor

Safety-aligned researcher

Works within Anthropic's public safety-first and research-driven framework.

Cady Tianyu Xu

Organization

Google DeepMind

Researcher, GenAI Team, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Caitlin Kalinowski

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Carly Ryan

Organization

Anthropic

Applied AI contributor

Safety-aligned researcher

Contributes to Anthropic's developer and agent engineering stack within the company's safety-first framing.

Casey Chu

Organization

OpenAI

Policy and governance operator

Directly credited with safety and model readiness on Operator.

Cat Wu

Organization

Anthropic

Product Manager

Safety-aligned researcher

Supports Anthropic product deployment within its safety-first framing.

Chaitu Aluru

Organization

xAI

Building Grok

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Charlie Nash

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Chris Bregler

Organization

Google DeepMind

Senior Director and Distinguished Scientist, Google DeepMind

Academic researcher

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Chris Ciauri

Organization

Anthropic

Head of International

Safety-aligned researcher

Supports global deployment under Anthropic's public safety-first framing.

Chris Liddell

Organization

Anthropic

Board Member

Policy and governance operator

Governance role supporting Anthropic's public-benefit mission.

Chris Ré

Nationality

American

Organization

Stanford University / Together AI / Cartesia AI

Professor of Computer Science, Stanford; Co-Founder, Together AI & Cartesia AI

BS, Computer Science — Cornell University

PhD, Computer Science — University of Washington

Academic researcher

Focuses on infrastructure reliability and training efficiency; no detailed standalone safety doctrine is documented here.

Christian Ryan

Organization

Anthropic

Applied AI

Frontier lab operator

Supports real-world deployment of Anthropic systems.

Christopher Manning

Nationality

Australian-American

Organization

Stanford University / AIX Ventures

Thomas M. Siebel Professor in Machine Learning, Stanford University; General Partner, AIX Ventures

BA (Hons), Mathematics, Computer Science, and Linguistics — Australian National University

PhD, Linguistics — Stanford University

Academic centrist

Advocates for responsible AI development through rigorous research and understanding of language model capabilities and limitations.

Christopher Zihao Li

Organization

xAI

MTS (Supercomputing)

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Claire Cui

Organization

Google / Google DeepMind

Google Fellow, Google / Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Claudio Angrigiani

Organization

xAI

Member of Technical Staff

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Clem Delangue

Nationality

French

Organization

Hugging Face

Co-founder & CEO, Hugging Face

Master in Management, Business Administration — ESCP Business School

Non-degree, Computer Science — Stanford University

Open-source AI maximalist

Believes open-source and community-driven development is the safest path for AI. Argues that transparency and broad access prevent concentration of power. Warns that the real risk is a few companies controlling AI behind closed doors.

Connor Jennings

Organization

Anthropic

Member of Technical Staff

Frontier lab operator

Contributes to Anthropic technical delivery.

Dale Schuurmans

Organization

Google DeepMind

Research Director, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Dan Belov

Organization

Google DeepMind

Distinguished Engineer, DeepMind and Google

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Daniela Amodei

Organization

Anthropic

Co-founder and President

Policy and governance operator

Publicly aligned with Anthropic's safety-first and public-benefit framing.

Daniel De Freitas

Organization

Google DeepMind

Senior Staff Software Engineer, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Daniel Golovin

Organization

Google DeepMind

Lead, Google DeepMind Pittsburgh

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Daniel Levy

Nationality

French

Organization

Safe Superintelligence Inc. (SSI)

Co-Founder & President, SSI

BS/MS, Mathematics — École Polytechnique

PhD, Computer Science — Stanford University

Safety-aligned researcher

Core mission is safe superintelligence — building the most powerful AI systems with safety as a first-class objective.

Daniel Rowland

Organization

xAI

Data center operations lead (per org chart reports)

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Danqi Chen

Nationality

Chinese-American

Organization

Princeton University

Associate Professor of Computer Science, Princeton University; Associate Director, Princeton Language and Intelligence

BEng, Computer Science — Tsinghua University

PhD, Computer Science — Stanford University

Academic centrist

Focuses on building reliable and verifiable NLP systems. Advocates for rigorous evaluation of model capabilities.

Dan Zheng

Organization

Google DeepMind

Research Engineer, Google DeepMind

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

Daphne Koller

Nationality

Israeli-American

Organization

insitro

Founder & CEO, insitro

BSc, Computer Science — Hebrew University of Jerusalem

MSc, Computer Science — Hebrew University of Jerusalem

PhD, Computer Science — Stanford University

Pro-innovation, science-driven

Optimistic about AI's transformative potential in science and healthcare. Believes responsible deployment requires domain expertise and rigorous validation. Advocates for AI as a tool to augment human capabilities rather than replace them, emphasizing collaboration between humans and machines.

Daron Acemoglu

Nationality

Turkish-American

Organization

MIT

Institute Professor, MIT

BA, Economics — University of York

MSc, Econometrics and Mathematical Economics — London School of Economics

PhD, Economics — London School of Economics

Institutionalist, pro-regulation

Skeptical of the AI industry's self-governance. Argues AI is being deployed primarily to automate and surveil workers rather than augment them, concentrating wealth and power. Warns that without strong institutions and regulation, AI will deepen inequality. Says "there are choices that are political, as well as technical, about how we develop AI."

David Ha

Nationality

Canadian

Organization

Sakana AI

Co-founder & CEO, Sakana AI

BSc, Engineering Science — University of Toronto

PhD, Computer Science — University of Tokyo

Open research advocate

Believes in building beneficial AI through nature-inspired approaches that are inherently more robust and interpretable than brute-force scaling.

David Hershey

Organization

Anthropic

Member of Technical Staff

Frontier lab operator

Contributes to Anthropic technical delivery.

David Luan

Nationality

American

Organization

Independent

Former VP, Amazon AGI SF Lab (departed Feb 2026)

BS, Applied Mathematics and Political Science — Yale University

Frontier lab operator

Primarily research-focused public profile; no detailed standalone safety doctrine is documented here.

David Medina

Organization

OpenAI

Frontier lab operator

Primarily capability-focused public profile; safety posture here is inferred from frontier-model development and launch-readiness work rather than standalone public advocacy.

David Patterson

Nationality

American

Organization

UC Berkeley / Google

Pardee Professor of Computer Science Emeritus, UC Berkeley; Distinguished Engineer, Google

BA, Mathematics — University of California, Los Angeles

MS & PhD, Computer Science — University of California, Los Angeles

Open-source hardware advocate

Focuses on hardware efficiency and open standards. Believes open hardware standards such as the RISC-V instruction set architecture are critical for democratizing computing and preventing monopolistic control of AI infrastructure.

David Saunders

Organization

Anthropic